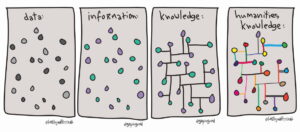

We understand knowledge construction to be social and combinatorial: we build on the knowledge of others, we create knowledge from data collected by ourselves and others, and so on. Although we pay a lot of attention to the processes behind the collection, recording, and archiving of our data, and are concerned about ensuring their findability, accessibility, interoperability, and reusability into the future, we pay much less attention to the technological mediation between ourselves and those same data. How do the search interfaces which we customarily employ in our archaeological data portals influence our use of them, and consequently affect the knowledge we create through them? How do they both enable and constrain us? And what are the implications for future interface designs?

As if to underline the lack of attention to interfaces, it’s often difficult to trace their history and development. Documenting that history is not something infrastructure providers tend to be particularly interested in, and the Internet Archive’s Wayback Machine doesn’t capture interfaces which use dynamically scripted pages. That gap writes off the visual history of the first ten years or more of development of the Archaeology Data Service’s ArchSearch interface, for example. The focus is, perhaps inevitably, on maintaining the interfaces we do have and looking forward to developing the next ones, but with relatively little sense of their history. Interfaces are all too often treated as transparent, transient – almost disposable – windows on the data they provide access to.