As archaeologists, we frequently celebrate the diversity and messiness of archaeological data: its literally fragmentary nature, inevitable incompleteness, variable recovery and capture, multiple temporalities, and so on. However, the tools and technologies that we have developed to use and reuse that data do their best to disguise or remove that messiness: most of those we employ in the recording, location, analysis, and reuse of our data try to reduce its complexity. Of course, there is nothing new in this – we have always tended to simplify in order to make data analysable. What is problematic, though, is that those technical structures assume that data are static things, whereas in reality they are highly volatile as they move from initial creation through to subsequent reuse, and as we select elements from them to address the particular research question in hand. This instability is something we often lose sight of.

The illusion of data as a fixed, stable resource is commonplace – or, even if we do not explicitly acknowledge it, we often treat data as if this were the case. In that sense, we subscribe to Latour’s perspective of data as “immutable mobiles” (Latour 1986; see also Leonelli 2020, 6): data travel, but they are not fundamentally changed by those travels. Books, for instance, are immutable mobiles: their immutability is acquired through the printing of multiple identical copies, and their mobility through their portability and the availability of those copies (Latour 1986, 11). The immutability of data is seen to give them their evidential status, while their mobility enables them to be taken up and reused by others elsewhere. This perspective underlies, implicitly at least, much of our approach to digital archaeological data and underpins the data infrastructures that we have created over the years.

We’re accustomed to the fact that much archaeology is collaborative in nature: we work with and rely on the work of others all the time to achieve our archaeological ends. However, what we overlook is the way in which much of what we do as archaeologists is dependent upon invisible collaborators – people who are absent, distanced, even disinterested. And these aren’t archaeologists working remotely and accessing the same virtual research environment as us in real time, although some of them may be archaeologists who developed the specialist software we have chosen to use. The majority of these are people we will never know, cannot know, who themselves will be ignorant of the context in which we have chosen to apply their products, and indeed, to compound things, will generally be unaware of each other. They are, quite literally, the ghosts in the machine.

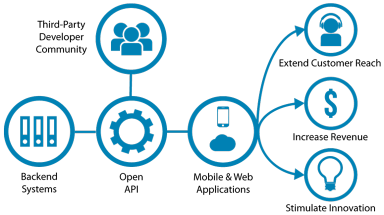

We’re becoming increasingly accustomed to our digital technologies acting as gatekeepers – perhaps most obviously in the way that the smartphone acts as gatekeeper to our calendar and/or email. In fact, this technological gatekeeping functionality appears everywhere you look, whether it’s in the form of physical devices providing access to information, software interfaces providing access to tools, or web interfaces providing access to data, for example. A while ago, I mused about the way that archaeological data are increasingly made available via key gatekeepers, and that consequently “