In the wake of the Facebook/Cambridge Analytica controversy, and partly in response to the loss of trust in big tech companies (see, for example, Chakravorti 2018 and Yao 2018), there has been some discussion seeking to revisit the original ideals of the World Wide Web and hypertext. Anil Dash recently suggested:

the time is perfect to revisit a few of the overlooked gems from past eras. Perhaps modern versions of these concepts could be what helps us rebuild the web into something that has the potential, excitement, and openness that got so many of us excited about it in the first place.

That seems a rather forlorn hope, perhaps, but his revisiting of core concepts such as ‘View Source’, ‘Authoring’, and ‘Transclusion’ rang bells in my mind and led me to exhume a paper I gave back in 2004 in a session on Archaeology and the Electronic Word at the ‘Tartan TAG’ conference in Glasgow (amazingly the programme and abstracts, if not the website, are still available via https://www.antiquity.ac.uk/tag). At that time, I suggested that discussion of hypertext within archaeology had been relatively limited, especially in relation to issues such as access, power, communication and knowledge (which admittedly overlooked the contributions on digital publication in Internet Archaeology 6 (1999), for instance). This was despite the number of archaeological theorists who were enthusiastic proponents of hypertext in archaeology. For example, Ian Hodder wrote of enhanced participation and the erosion of hierarchical systems of archaeological knowledge together with the emergence of a different model of knowledge based on networks and flows – an environment in which “interactivity, multivocality and reflexivity are encouraged” (Hodder 1999a and see also Hodder 1999b, 117ff). Michael Shanks wrote of the benefits of collaborative writing in his Traumwerk wiki (no longer available) and the new insights that such activity can throw up through using an environment in which anyone could create and edit web pages on a particular topic, and add or alter the content of any contributions. Cornelius Holtorf published a number of papers discussing electronic scholarship and his experience in creating and publishing a hypermedia thesis (e.g. Holtorf 1999, 2001, 2004). In contrast, I proposed that there was a significant dislocation between the rhetoric and the reality – that what was actually being presented on our screens was masquerading as something which it was not and that consequently there might be a utopian or even a fetishistic dialectic at work.

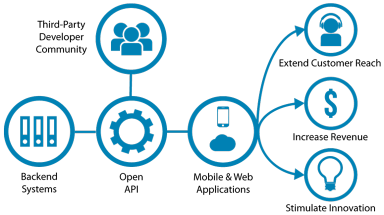

We’re becoming increasingly accustomed to our digital technologies acting as gatekeepers – perhaps most obviously in the way that the smartphone acts as gatekeeper to our calendar and/or email. In fact, this technological gatekeeping functionality appears everywhere you look, whether it’s in the form of physical devices providing access to information, software interfaces providing access to tools, or web interfaces providing access to data, for example. A while ago, I mused about the way that archaeological data are increasingly made available via key gatekeepers, and that consequently “
